Today we are going to learn how to use iloc to get values from a pandas DataFrame, and we will compare iloc with loc.…
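
To make the comparison concrete, here is a minimal sketch. The DataFrame, its column names, and its index labels are made up for illustration and do not come from the post itself. It shows that `iloc` selects by integer position while `loc` selects by label, and that the two treat slice end points differently.

```python
import pandas as pd

# Hypothetical data: a small DataFrame with string index labels.
df = pd.DataFrame(
    {"name": ["Ann", "Bob", "Cy"], "age": [31, 25, 40]},
    index=["a", "b", "c"],
)

# iloc: integer positions (row 1, column 1).
age_by_position = df.iloc[1, 1]

# loc: labels (row "b", column "age"). Same cell as above.
age_by_label = df.loc["b", "age"]

# Slicing differs: iloc excludes the end position, loc includes the end label.
position_slice = df.iloc[0:1]    # only row "a"
label_slice = df.loc["a":"b"]    # rows "a" and "b"
```

The slicing difference is the part that tends to surprise people: `iloc[0:1]` follows normal Python slicing, while `loc["a":"b"]` keeps both end labels.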

A Tip A Day? Yes! This website mostly covers #MachineLearning, #DataScience, #Python and related topics. Now we are going to start…

We know that we can replace NaN values with the mean or median using fillna(). What if the NaN data is correlated to another categorical…
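
One common way to handle this case is to fill each missing value with the mean of its own category rather than the overall mean. The sketch below assumes that approach; the excerpt is cut off, so this may not be exactly what the post does, and the column names `pclass` and `age` are hypothetical.

```python
import numpy as np
import pandas as pd

# Hypothetical data: "age" has gaps, "pclass" is the related categorical column.
df = pd.DataFrame({
    "pclass": ["first", "first", "third", "third", "third"],
    "age": [40.0, np.nan, 20.0, 30.0, np.nan],
})

# Mean age per category, broadcast back to one value per row.
# NaN values are skipped when the mean is computed.
group_mean = df.groupby("pclass")["age"].transform("mean")

# Fill each missing age with the mean of its own category.
df["age_filled"] = df["age"].fillna(group_mean)
```

Using `transform` keeps the result aligned with the original rows, so it can be passed straight to `fillna()`.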

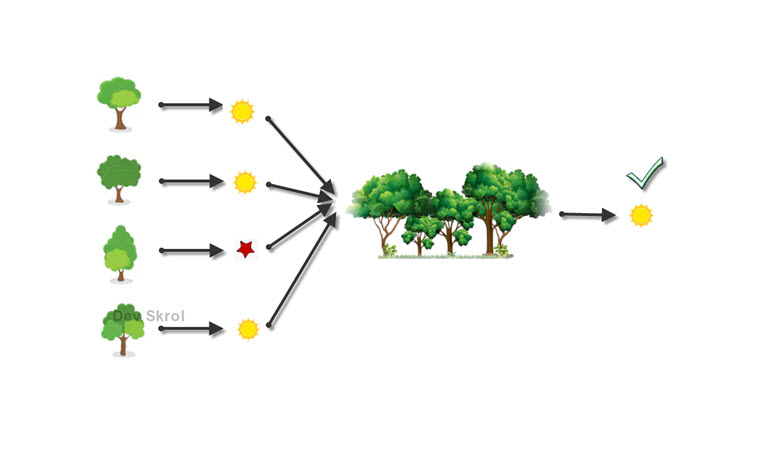

How does Random Forest work? How do we overcome overfitting? What is ensemble learning? Why do we need Random Forest? What is bagging (bootstrap aggregation)?

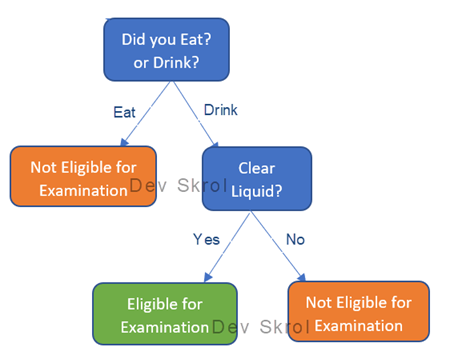

In this article we will learn what a Decision Tree is, how it works, why a Decision Tree overfits, and how we can resolve it.

In machine learning, training a model and testing it is definitely not the end. Should we run the source code for training and tuning everything again…

In this article we will explore the Titanic dataset with Logistic Regression and classification metrics. Let's see how to do logistic regression with…

Logistic Regression – Cost Function, Error Metrics, Precision, Recall, Specificity, ROC Curve, F-Score, Observations from ROC Curve
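
As a quick reminder of how these metrics relate, here is a minimal sketch that computes precision, recall, specificity and the F1 score from the four confusion-matrix counts. The function name and the counts are hypothetical, and the code assumes none of the denominators is zero.

```python
def classification_metrics(tp, fp, fn, tn):
    """Compute basic metrics from confusion-matrix counts (assumes non-zero denominators)."""
    precision = tp / (tp + fp)        # of predicted positives, how many are correct
    recall = tp / (tp + fn)           # of actual positives, how many were found
    specificity = tn / (tn + fp)      # of actual negatives, how many were found
    f1 = 2 * precision * recall / (precision + recall)  # harmonic mean of the two
    return {
        "precision": precision,
        "recall": recall,
        "specificity": specificity,
        "f1": f1,
    }

# Hypothetical counts, chosen only for illustration.
metrics = classification_metrics(tp=40, fp=10, fn=20, tn=30)
```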

Logistic Regression – Transformation of Linear to Logistic – Explained with images and examples – Sigmoid Function
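
The transformation itself fits in a few lines. This is a minimal sketch with made-up coefficients `w` and `b`: a linear output can be any real number, and the sigmoid function squashes it into the range (0, 1) so it can be read as a probability.

```python
import numpy as np

def sigmoid(z):
    """Map any real number (or array) to the open interval (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))

# Hypothetical coefficients and inputs, chosen only for illustration.
w, b = 2.0, -1.0
x = np.array([-2.0, 0.5, 3.0])

linear_output = w * x + b               # unbounded linear values
probabilities = sigmoid(linear_output)  # each value now lies between 0 and 1
```

A linear output of exactly 0 maps to a probability of 0.5, which is why 0.5 is the usual default decision threshold.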

In this article, we will see how to join two NumPy arrays using built-in functions. NumPy – Joining two NumPy arrays. stack: joins arrays…
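
As a rough illustration of the kind of built-in functions involved, the sketch below joins two small, made-up arrays with `np.stack`, `np.concatenate`, `np.vstack` and `np.hstack`. Which of these the full post covers beyond `stack` is an assumption.

```python
import numpy as np

a = np.array([1, 2, 3])
b = np.array([4, 5, 6])

# stack: joins arrays along a NEW axis, so the result gains a dimension.
stacked_rows = np.stack((a, b))           # shape (2, 3)
stacked_cols = np.stack((a, b), axis=1)   # shape (3, 2)

# concatenate: joins arrays along an EXISTING axis.
joined = np.concatenate((a, b))           # shape (6,)

# vstack / hstack: convenience wrappers for vertical and horizontal joining.
vertical = np.vstack((a, b))              # shape (2, 3)
horizontal = np.hstack((a, b))            # shape (6,)
```

The main thing to keep in mind is that `stack` requires all inputs to have the same shape, because it adds a new axis instead of extending an existing one.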